Art and Artificial Intelligence

The robots are coming, for you and your art.

It’s been a big few years for technology and art, and an even bigger few months, with three recent events in particular making waves and headlines across the art world.

At the end of August 2022, San Francisco-based artificial intelligence (AI) start-up OpenAI introduced Outpainting, a new feature of its DALL·E AI-powered text-to-image generator. Outpainting enables users, through text inputs delivered as ‘prompts’ to an AI model, to ‘extend’ an image beyond its original borders. In its announcement of the new feature, OpenAI shared an ‘outpainting’ of Johannes Vermeer’s Girl with a Pearl Earring (1665), commissioned from artist August Kamp. AI-powered image enhancement has been slowly building steam over the past few years, enabling moderate improvements to images (most often increasing the quality of older and/or low-resolution photographs). But this — evidenced in the time-lapse video OpenAI released alongside Kamp’s ‘outpainting’, demonstrating the process — is something else. Once a striking close-up portrait of a young woman, Vermeer’s Girl with a Pearl Earring is ‘outpainted’ by Kamp into a woman captured in situ in her home, surrounded by baskets, flowers, fruit, plants, cups, saucers, and the general clutter of life. And all of this was created, lightning-quick, with just the touch of a few buttons.

Also in August 2022, Théâtre d’Opéra Spatial (2022), an AI-generated image — one of three printed on canvas and submitted by artist Jason Allen — was awarded first place and a small cash prize in a fine arts competition at the Colorado State Fair in Pueblo. You can imagine the ensuing headlines. But while the competition’s judges were unaware that Allen had used the AI-powered text-to-image generator Midjourney to create Théâtre d’Opéra Spatial, Allen broke no rules. The image was submitted in the Emerging Artist Digital Arts/Digitally-Manipulated Photography category under ‘Jason M. Allen via Midjourney’, and judges interviewed amidst the media backlash insisted that even if they had known what Midjourney was and how Allen had used it, they would still have awarded him first place.

And in late 2021, Beijing-based artist duo Sun Yuan and Peng Yu’s Can’t Help Myself (2016) went viral on TikTok. First commissioned for and exhibited in Tales of Our Time at the Solomon R. Guggenheim Museum in New York in 2016, it was later presented in May You Live in Interesting Times in the Central Pavilion of La Biennale di Venezia in 2019. Can’t Help Myself is an exploration of authoritarianism and automation by the state, from surveillance to border control. But new online audiences, encountering the work on TikTok without any context, interpreted the industrial robot, visual-recognition sensors, software systems, and cellulose ether in red-coloured water, all behind clear acrylic walls, somewhat differently. They saw an object of pity and abjection: a creature trapped in the Sisyphean task of keeping blood-red fluid within an arbitrary zone inside a glass cage.

From all the software and hardware emerge the eternal questions: “Is this an artist?” and “Is this art?” Well, that depends on what you believe — about agency, creativity, consciousness, the soul and more. So, just the small stuff. Let’s rewind.

Back to the Future

Various art movements throughout the mid-to-late 20th century explored the systematisation and automation of artistic processes and are important precursors to the art-and-AI we know today. Two moments set the scene. First, in 1934, the Museum of Modern Art in New York presented Machine Art, one of the first exhibitions from its newly minted Department of Architecture and Design. Objects designed by humans but made by machines, from household items to industrial equipment, were presented for the first time as art objects. And, two decades later, in 1956, probably the first human likeness to appear on a computer screen (a George Petty-inspired female pin-up figure) was presented by an IBM employee on the glowing cathode-ray tube screen of a US$238 million military computer.

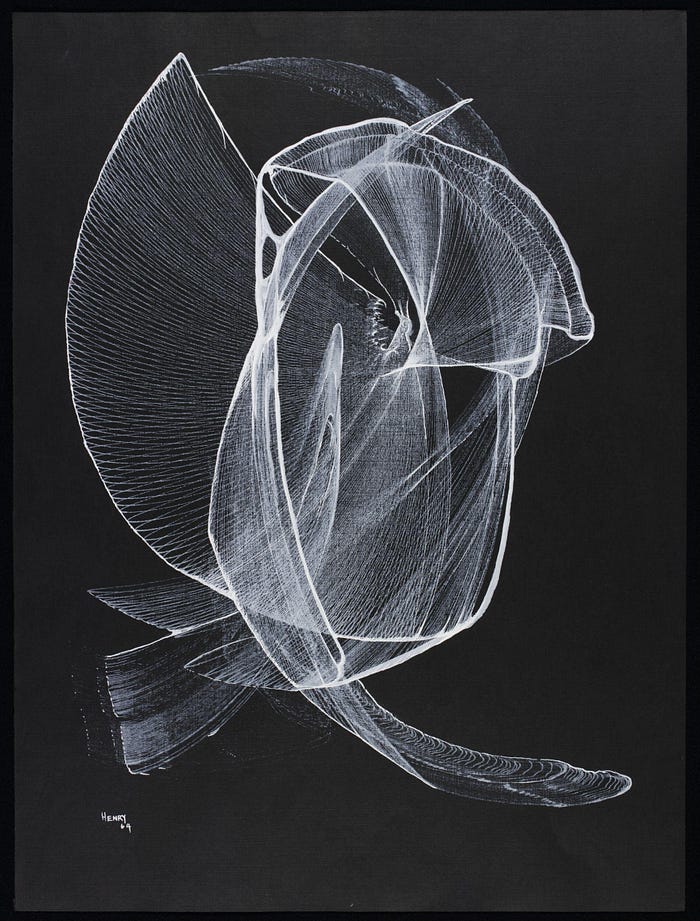

Then, in the 1960s, as computers were becoming more available to the general public, artists working independently across the globe began pioneering algorithmic drawing, including Joan Truckenbrod in America and Vera Molnár in Europe, both of whom worked in the programming language Fortran. Meanwhile, other artists were pioneering explicitly instructional and procedural formats of artistic production, such as Sol LeWitt’s Wall Drawings and the early works of Fluxus artist George Brecht, whose instructions on cards have an enduring legacy in the likes of Hans Ulrich Obrist’s do it (which began in 1993 and continues through to the present day). In the mid-to-late 1960s, in Europe and America, institutions began to take notice. Early exhibitions were held at the Technische Hochschule and Galerie Wendelin Niedlich in Stuttgart, at Howard Wise Gallery in New York, and at the Institute of Contemporary Arts in London. Artists were also experimenting with automation through robotic processes, from Desmond Paul Henry’s Henry Drawing Machine (1960) and Raymond Auger’s Painting Machine (1962) to Harold Cohen’s AARON (1972) and Herbert W. Franke’s MONDRIAN (1979), and works by Joseph Nechvatal, Ken Goldberg, Matthias Groebel, Roman Verostko, and many others through the 1980s and 1990s. In 1995, Verostko and fellow artist Jean-Pierre Hébert founded the Algorists, a group working (you guessed it) with algorithms. Many of these pioneers were born in the 1920s and 1930s.

In other words, as long as we’ve had machines, computers, and software, artists have been using them to make art. And as long as they’ve been doing so, we’ve been asking what is and isn’t art, and who (or what) is and isn’t an artist. But the recent increased visibility of artists working with automation, and AI in particular, is a predictable consequence of the increased availability and accessibility of the technology. The Diffusion of Innovations theory popularised by Everett Rogers is useful here to describe the bell curve of technology adoption. It’s a tale as old as bits and bytes: governments spend billions of dollars investing in the research and development of technologies for defence applications, which eventually creep along to the bleeding edge of consumer markets. As technologies improve, becoming smaller, faster, and cheaper, demand and supply both rise, and they reach mass consumer markets. Technologies once used by soldiers both on and off the battlefield or only within the confines of a lab find their place in civilian lives, and so we have the internet, drones, and virtual reality (VR) headsets in our homes and businesses… and our art.

When these technologies are adopted by artists, the reception is always mixed. On the one hand, not many artists, creatives, and arts workers could or would claim to be conservative in the truest sense of the word: wanting to conserve long-standing ways of thinking, being, and doing to the exclusion of all that is new. The world changes, and art changes with it. On the other hand, for all new technologies, from photography to holography, there are always plenty of detractors who maintain that their use in the arts is at best misguided and at worst an abomination. More often than not, these detractors identify as purists, and insist that they are simply concerned with good art. And aren’t we all? But overall, the more complex new technologies become, and the less their use by artists resembles ‘the good old days’ (of, for the most part, skill-based painting, drawing, and sculpture, made by a solo genius), the more people balk, and the more we all argue about it.

Technologies that fall under the umbrella of AI are the most complex ones employed across the arts to date, and so there’s been plenty of arguing, especially in recent months. But while artists working with AI may not yet be mainstream, they are not exactly at the fringe either. AI artist Trevor Paglen is represented by Pace, and 98-year-old pioneer Molnár is represented by Thaddaeus Ropac. Leading AI artists Hito Steyerl, Jenna Sutela, and Ian Cheng have each exhibited in recent years at the Serpentine Galleries in London, while Refik Anadol’s large-scale screen-based, projected, and immersive works have been presented at König Galerie in Berlin, Centre Pompidou in Paris, the National Gallery of Victoria in Melbourne, the Frank Gehry-designed Walt Disney Concert Hall in Los Angeles, and as part of La Biennale Architettura in Venice in 2021. Institutional shows, including Chance and Control: Art in the Age of Computers at the Victoria and Albert Museum in London in 2018, and retrospectives for Molnár at the Beall Center in California and fellow pioneer Franke at the Francisco Carolinum in Linz, have been unearthing and recontextualising pioneering works for new and eager audiences.

It is important to note here that there is no shared framework or set of definitions across the field of AI. For our purposes, it will suffice to place automation at one end of a spectrum of autonomy and complexity, where the artists working from the 1960s through the 2010s generally reside. At the midway point, we can place existing ‘narrow’ (specific and limited) forms of AI, including Midjourney and DALL·E. At the other end, we can place ‘general’ AI: AI that is flexible, independent, self-sufficient and, arguably, ‘human-like’.

But, is it Art?

DALL·E and Midjourney are just two in a sea of new AI-powered text-to-image tools hitting the internet and creative markets in waves since early 2021. Others include Imagen and Parti, both from Google’s Brain Team, and DreamStudio and Stable Diffusion, both from Stability AI, as well as two-dozen-odd other tools of varying levels of sophistication. Even TikTok and Meta have entered the fray, with an AI greenscreen feature and Make-a-Scene respectively. These tools belong to a subset of AI known as ‘generative’ AI, and in the eyes of believers they create what is known as ‘generative art’. Generative systems of AI are, in simplest terms, ones that have been ‘trained’ on large sets of data: in these cases, on text-image pairings, and lots of them. The latest version of DALL·E, for example, was fed 250 million images and their text descriptions. Through an advanced type of AI termed ‘deep learning’ (a subset of ‘machine learning’), DALL·E then learned the relationships between images and words. Now, taking text (or ‘natural language’) prompts from users (e.g., “a robot hand drawing”), DALL·E generates new images. Voilà.

There are issues with and limitations to the methodologies of generative AI, of which existing AI-powered text-to-image tools are just one example. The first issue is the opacity of processes — both how an AI model is trained and how it produces new outputs. (This is commonly referred to as the ‘black box’ problem of AI.) Second is the sourcing of the data used for the training process — who or what the data belongs to and what permissions have been given for its use. Third is the notorious bias of these and most AI systems. Early tests of the latest version of DALL·E, for example, showed that it tended to generate images of white men and hypersexualised images of women, and that it reinforced racial stereotypes. Fourth is the rapid commercialisation of these platforms, in which for-profit tech companies take, for example, every available image ever created by mankind and scrape these images into proprietary data sets to which they sell access. At best, this might be considered extractive capitalism; at worst, automated plagiarism. Fifth is in whom, or in what, authorship, ownership, and copyright are vested, and where the proceeds from commercial use end up.

Humans Need Not Apply

In the art world, the debate surrounding the use of these tools centres on whether the outputs can be considered art (and, if so, who or what might be considered the artist). Artists and enthusiasts have divided themselves into factions online, arguing their respective corners across Twitter, Discord, Reddit, Substack, and Medium. Humans are algorithmic beings, processing information and churning out art in much the same way as these systems, argues one group. These outputs are statistical imitations of art, data-crunching rather than creating, argues a second group. Prompting these tools is an art form of its own, argues a third. There’s a difference between generating images and generating art, argues another. But if an audience can’t tell the difference between images generated by an AI tool and those created by a human artist, what’s the difference? asks yet another.

There are several precedents for the latter, beyond DALL·E and its peers. In 2017, for example, Ahmed Elgammal, Bingchen Liu, Mohamed Elhoseiny, and Marian Mazzone from Rutgers University’s Art and Artificial Intelligence Laboratory presented their Creative Adversarial Network (CAN) project at Art Basel in Switzerland. The images generated by CAN, a model trained on 80,000 images of predominantly Western artworks from the 15th through 20th centuries, were presented alongside 50 artworks by artists including Andy Warhol, Leonardo Drew, and David Smith. In 75% of cases, the 18 people surveyed believed that the AI-generated images had been created by a human being; in 85% of cases, the AI-generated images were believed to be Abstract Expressionist artworks. The robustness of this particular study’s methodology matters less here than the phenomenon in general, given how ubiquitous the debate has recently become. That is, if people see AI-generated images as art, and are moved by them, then what? What do we need human artists for?

For Swedish artist Jonas Lund, DALL·E, Midjourney and other existing forms of AI “cannot be art without an artist … Without an artist, it’s not art, it’s something else.” In other words, just because the outputs of these sophisticated AI systems are images doesn’t mean they’re art. Humans are, after all, liable to pareidolia: imposing form and meaning where there is none, like seeing the face of Jesus Christ in a piece of toast. Artworks need intention and meaning, and they need an artist. “But it’s not so important if it is or isn’t art,” Lund continues, over Zoom. “It’s very easy for it to become art: all that’s required for it to become art is that an artist claims it as art … It doesn’t mean it’s good art, and it doesn’t mean it has any effect, but it can still be art.” This line of reasoning holds for AI in all of its current forms, which are generally considered ‘narrow’ rather than ‘general’. So, what happens when the AI becomes general, so advanced as to operate entirely independently and self-sufficiently? Such AI could no longer be seen as merely an artwork’s medium, or an artist’s tool or collaborator. Within our existing philosophical frameworks, wouldn’t it be considered an artist in its own right?

Artists working in and with AI are largely uninterested in these questions. The questions are purely theoretical and, in the eyes of many, will remain so in our lifetimes, if not forever. For Lund, for example, there are more pressing questions, and AI and automation are simply tools with which he works to explore them. Lund’s MVP (Most Valuable Painting) is an online participatory project examining the role of ‘value’ in art. Its 512 individual digital paintings change and evolve according to likeability and sales. Powered by a ‘fitness’ algorithm, these artworks ‘optimise’ themselves to become more ‘desirable’. The 512th painting becomes the Most Valuable Painting and a record of the aesthetic value of the 511 paintings sold before it, as determined by their audience and collectors. At mvp.art, Lund tracks the evolution of each painting over the course of its iterations (or ‘generations’).

But only a small proportion of artists work in and with AI. So, while these artists may be relatively unfazed by recent developments (and by larger philosophical questions), others are a bit more anxious. Artists who work with little if any technology seem especially concerned (fear of the unknown, perhaps, stoked by media headlines and online discourse). But most concerned of all are professionals in the creative industries, including, but not limited to, illustrators, animators, concept artists, and graphic designers. These creative jobs differ from those of artists in that they are predicated on working to briefs and delivering to clients. They do not strive for the intentionality, socio-historical context, and depth of meaning of works presented as capital-A Art. Because of this, many professionals in the creative industries fear that they are more easily replaced by sophisticated AI-powered text-to-image generators. As yet, DALL·E, Midjourney and co. are not sophisticated enough to replace human professionals entirely; people are still required to fix mistakes and finish the job. If (and when) the outputs from these tools become serviceable without human intervention, what happens then? Graphic designer wanted; humans need not apply.

‘Productivity’ and ‘Progress’

As they say, the robots are coming. But it’s important to note that, relatively speaking, artists and creatives more broadly have had to deal with very little disruption and threat to their livelihoods from AI and automation to date. The arts have been one of the last dominoes left standing, while advances in software and hardware (including but not limited to AI) have reduced demand for physical and mental human labour across other industries and professions. Proponents of capitalism argue that the resulting gains in productivity will, at least in theory, be redistributed through corporate taxation, freeing humans up to complete more advanced and complex labour. But the ability to create art, ostensibly a function of our unique and sophisticated human experience, has long been held up and apart from all this as one of several mechanisms for testing how ‘advanced’ (read: how ‘human’) an AI system is. First, they came for the chess, Go, and Jeopardy players, and we said nothing. So, what now? What do terms like ‘productivity’ and ‘progress’ mean in art? What are the benefits, how are they distributed, and where does this leave artists and creatives? Is this the Darwinian evolution of art, or one of the horsemen of the apocalypse?

Of course, AI is no monolith, so any ‘productivity’ and ‘progress’ enabled by AI in the arts looks different depending on what is used, and how. Bosch Bot and Libby Heaney’s Ent-, for example, both take Hieronymus Bosch’s three-panel late-15th-century masterpiece The Garden of Earthly Delights as their source and subject. British artist and quantum physicist Heaney’s Ent-, commissioned by German foundation Light Art Space and exhibited at the Schering Stiftung in Berlin and Arebyte in London in 2022, is an immersive installation employing AI, quantum computing, and gaming technology. Reimagining the central panel of The Garden of Earthly Delights using Heaney’s own watercolours of Bosch’s oil painting, the installation posits that technology, including quantum computing and quantum machine learning, is its own kind of faith or religion. Meanwhile, Bosch Bot (@boschbot on both Twitter and Instagram), a ‘museum bot’ created by IT consultant Nig Thomas, is a curatorial tool engaging new and existing audiences. Presumably working from the 30,000-pixel-wide .jpeg of The Garden of Earthly Delights made available for free online via the Museo del Prado, Bosch Bot randomly selects and tweets a section of the painting. No-one puts it better than Gabrielle de la Puente of The White Pube, who wrote: “I love that the irony does and doesn’t honour the original painting … Bosch Bot has baited me into consuming actual art history knowledge. It has tricked me into a greater enjoyment of the work. I am so mad! I love it!” Though one is an artwork and the other a curatorial tool, side by side, in Ent- and in Bosch Bot, we see the same source material reinterpreted in different ways through different types of AI to different ends.

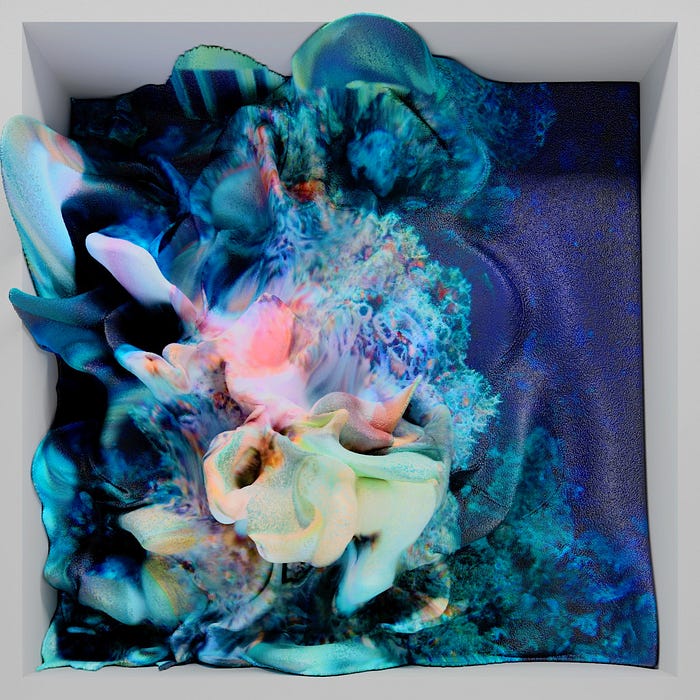

The ability to work with and investigate ideas through massive data sets is one way that ‘productivity’ and ‘progress’ through AI manifests in the arts. Human beings can’t, for example, look at and analyse tens of millions of individual images. Since 2013, Turkish artist Refik Anadol has been working with machine intelligence, latterly including different Generative Adversarial Network (GAN) algorithms, to do just this. Creating datasets from as many as 200 million publicly available images of everything from coral reefs to outer space, Anadol applies multiple AI processes to generate mesmerising ‘data sculptures’. In his Machine Hallucinations series, Anadol visualises and explores the ‘collective memory’ held in these massive data sets. (The term ‘hallucinate’ in the title of these works is also a metaphor for human consciousness, anthropomorphising their AI systems.) Through his practice, Anadol is “trying to find the poetry inside those datasets, to create a new meaning beyond what data means.”

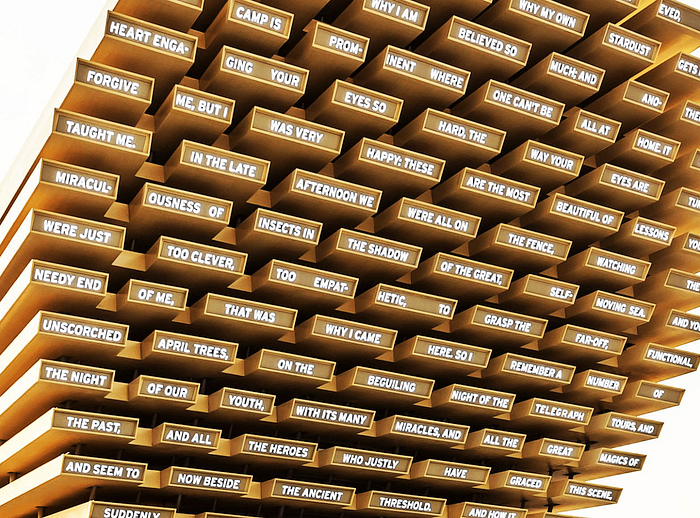

Artists are investigating collective consciousness, experience, and memory anew through AI, empowered by the ‘productivity’ and/or ‘progress’ it brings to processing and interacting with data in real time, in works that invite audience participation and produce dynamic outputs. British artist Es Devlin’s Poem Pavilion, presented at the delayed World EXPO 2020 in Dubai in 2021, for example, developed from the artist’s explorations of ‘social sculpture’, first prompted by Hans Ulrich Obrist. Poem Pavilion presents machine-generated poetry on a 20-metre-high timber conical structure designed to resemble a musical instrument. The poems are generated from words ‘donated’ by visitors, which are processed using the machine learning language model GPT-2. The cumulative collective poem then illuminates the structure’s façade in both English and Arabic. Interaction through movement and dance is also fertile ground for artists working with AI, including Swedish-Finnish conceptual art duo Ida Jonsson and Simon Saarinen. Their moving image work Orpheus in the Metaverse (2020) explores what image-manipulation technologies, including those powered by AI (deepfakes, for example), might mean for our future. In the computer-generated virtual ballet performance, the movements of an amateur dancer are reconfigured by AI, glitching into the leaps and pirouettes of a ballerino.

For Botto, a bot (a software robot designed to automate tasks and often to simulate humans) that uses multiple AI algorithms, ‘productivity’ is about output: it churns out 350 new images each week using methodologies similar to those employed by AI-powered text-to-image generators. Botto is billed as a DAA, or Decentralised Autonomous Artist, riffing on the term DAO, or Decentralised Autonomous Organisation. Without getting into the weeds, Botto is described this way because it is attached to a DAO. Each week, once Botto produces its 350 new images, its DAO’s 5,000 members vote for their favourites. The top images are minted as NFTs and auctioned off on SuperRare. In December last year, six of Botto’s NFTs were sold for US$1.3 million. Botto is the brainchild of German artist Mario Klingemann, who was interviewed alongside his creation by Harmon Leon for SuperRare Magazine in February 2022. But how, you might ask, does an ‘AI artist’ answer an interviewer’s questions, given the limitations of current forms of AI? Klingemann fed the interview questions to GPT-3, a natural language AI model, using a methodology similar to that employed by Botto in creating images. In a comical though entirely sincere exchange, the interviewer asks Botto the meaning of its name. Botto offers that it is a pun on the word ‘booty’, referring to pirate treasure and/or to buttocks. Klingemann interrupts to ask Botto if its name doesn’t come from the term ‘bot’. “What am I, a bot?” Botto asks in response.

The answer to that question is that it probably depends on who you ask. Botto is not advanced enough to be conscious or sentient, but neither is Google’s latest LaMDA chatbot, and LaMDA was mistaken for a sentient being by one of Google’s own senior engineers. (He was later dismissed.) Different people working with the same forms of AI experience different things. Some say it seems to have a soul, others that it is just a computer program. But the ‘disembodied’ forms of AI discussed so far (all software, confined to computers) don’t seem to be perceived as any less threatening to artists, and humans, than ‘embodied’ versions of AI. The former terrify people because they don’t have a body, and the latter terrify people because they do. Either way, they threaten our anthropocentric view of the world.

Death of the Artist?

Increasingly, artists are also working with embodied forms of AI, combining software with hardware. In other words, robots. There is a spectrum here of the use of robotics in art. At one end are artists working in the tradition of algorithmic drawing and the ‘art machines’ of the 1960s through 1990s. In this camp is Australian artist James Dodd, whose ongoing Painting Mill project spins abstractions in acrylic on canvas and board. Such projects are largely static machines with moving components. In other artworks, including Sun Yuan and Peng Yu’s Can’t Help Myself, robots begin to take on the qualities of living forms. Further still along the spectrum of embodied AI, Polish-American artist Agnieszka Pilat paints alongside two robots: Spot, from Boston Dynamics, and Digit, from Agility Robotics. The former is a sunshine-yellow dog-like robot. If you were cynically inclined, you might wonder if this is the result of some clever PR outreach by Boston Dynamics in an effort to put a friendlier face on what regrettably resembles the ruthless animaloids from Black Mirror’s Metalhead episode. This might equally be the case for Digit, a headless humanoid in bright turquoise, silver, and black. For her part, Pilat describes herself as master and Spot and Digit as apprentices labouring under her, as, for example, Leonardo da Vinci did under Andrea del Verrocchio in the 15th century.

Such ostensible human supremacy, over AI as well as over all other forms of life on earth, is interrogated by Swedish multidisciplinary artist Arvida Byström. Her latest exhibition, A Doll’s House, at Galleri Format in Malmö, includes A Cybernetic Doll’s House, a performance piece by the artist with RealDoll’s AI sex doll Harmony, in which she asks the robot, dressed as her doppelgänger, questions provided by the audience. In a photographic work from the exhibition, Byström poses with Harmony in the format of Michelangelo’s Pietà: Byström is the Virgin Mary and Harmony is Jesus Christ. Will Byström be surpassed by Harmony, and humans by AI, much as Mary was by her son? Is one doomed to perish in the arms of the other? In the artist’s restaging, Byström cradles Harmony in her arms, inverting the predicted outcomes of AI.

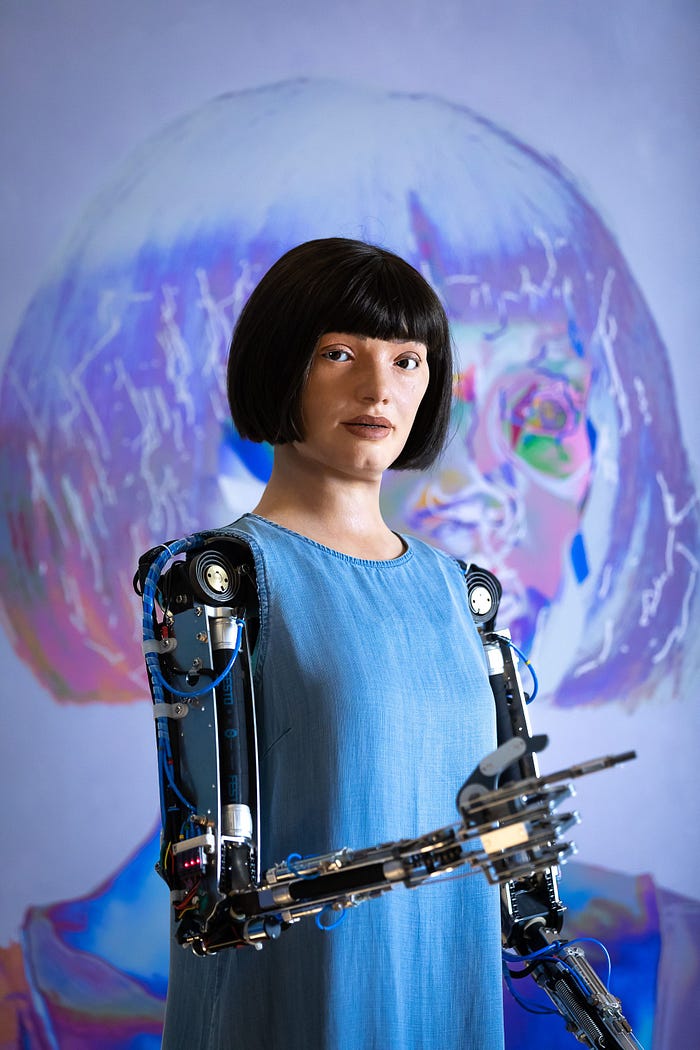

From Pygmalion to Pinocchio, and Lars and the Real Girl to A Doll’s House, artists anthropomorphise figures to ask what it means to be human. This question is pushed to its present limits through two ‘ultra-realistic’ humanoid robots billed as artists: Sophia (from Hanson Robotics) and Ai-Da (from Aidan Meller, Lucy Seal, Engineered Arts, Salah El Abd, and Ziad Abass). The relative realism of Sophia and Ai-Da has rendered them high-profile projects — Ai-Da exhibited in La Biennale di Venezia 2022, in Leaping into the Metaverse at the Concilio Europeo dell’Arte. Embodying AI in this way — in Ai-Da and Sophia, but also in Spot, Digit, and others — might be seen as an effort to ‘legitimise’ art-and-AI, with visible manual labour and a physical figure on which to confer ‘artist’ status. Aren’t the outputs art, if they were created by an artist?

In turn, this raises the question of whether it’s Sophia, Ai-Da, Spot, and Digit that are the artists, if it’s the teams behind them, if it’s their engineers, or some combination of them all. Ask an artist, an engineer, a philosopher, and a lawyer, and you’ll get different answers. Ask another artist, another engineer, another philosopher, and another lawyer, and you’ll get different answers again. Maybe the better question, to paraphrase Old El Paso, is why not all? Creative teams have precedents across music, film, fashion, and beyond. But for the art world, it would be an almost radical expansion of the concept of the ‘artist’, as it evolved from the pre-Enlightenment ‘artisan’ into the singular artistic genius of the 18th and 19th centuries, and the professional artist figure of the 20th century through today.

Modern, postmodern, and contemporary art challenge the notion that technical artistic skill plus labour equals art (“I could do that.” “Yeah, but you didn’t.”). “The idea becomes a machine that makes the art,” wrote Sol LeWitt. But when we introduce actual machines, and then AI, things get complicated. Take Takashi Murakami, Jeff Koons, and Damien Hirst, for example, who conceptualise works that are then ‘realised’ by their studio assistants; the finished works are nonetheless attributed to the artists, not to the assistants. Yet when works are conceptualised or prompted by humans and ‘realised’ by embodied AI, they are attributed to the latter (Sophia, Ai-Da, Spot, Digit etc.). And the outputs of AI tools (DALL·E, Midjourney etc.) are attributed to the end users that prompted them, not to the AI or its human engineers. Figure that out. In each case, it is a question of discerning how autonomous a given piece of AI is, and the ways in which contributions are made at different points in the creative process.

But all of this operates under a humanist perspective on agency, consciousness and creativity, where to be more ‘advanced’ is to be more ‘human’. Which is why it is so interesting that humans seem preoccupied with engaging embodied forms of AI in self-portraiture — Sophia, Ai-Da, Spot, and Digit included. We create technologies and are compelled to anthropomorphise them, and then to ask them to see and record their own image. Dance, monkey, dance. What are we playing at, and what are they even showing us? Is it them, or is it us — a selfie through an Inspector Gadget robot arm? There is something about augmentation through duplication here; the ostensible optimisation promised by technologies, including AI. God made us in their own image, and we remake the world in ours. Are we playing God, remaking God, or something else? We are equal parts terrified and fascinated by what we create. Why do we continue to do it? Are we facing our fears, or are we making them come true?

Human After All

Curiosity (and competitiveness) took us to the moon, and from the moon we looked back at the Earth. AI might be viewed in much the same way: in the forces driving its advancement, and in its offer to take us outside ourselves, to see things anew. As it advances, AI compels us to ask ourselves what separates us from atoms, from inanimate objects, from nature, from other animals, from technology, and from machines. Is it consciousness, sentience, or conscience? Is it the ability to create? But if birds can sing, if dolphins and whales call each other by their names, if elephants dance, if monkeys take selfies, if dogs, pigs, penguins, and the machines we make can paint, then what? Do we say that our ability to create art, over and above simple creativity, lies in our ability to reflect on and convey the conditions of existence?

We humans are the sum of our society and our experiences. Our stories, so often our art, are who we are. This is what we tell ourselves. The superiority of the human brain, and the vesting of spirit or soul primarily in humans by dominant religions over the course of history, place us at the centre of the universe. These are the same reasons we find AI and its potential creativity so threatening. Is it really so simple to manufacture and automate creativity? “Of all that is written, I love only what a person hath written with their blood. Write with blood, and thou wilt find that blood is spirit,” wrote Friedrich Nietzsche in Thus Spoke Zarathustra. What blood, what sacrifice, does art ask of a machine? What is a machine’s story, or its experience? And what happens when we create technologies so sophisticated that they come to deserve human rights, from the rights to freedom from slavery and freedom of thought to the rights to consent, authorship, ownership, and remuneration? And, then, if there’s nothing special about being human, where does that leave us?

Our struggle to process advancing AI, within and beyond the arts, is a function of doing so through an anthropocentric view of existence. Mainstream ways of seeing and understanding the world don’t, at present, have a lot of room for non-human entities with equal or superior physical and intellectual capabilities. Our anthropocentric view of existence is all that we have: a weighted blanket settling over our fears, quieting the mind enough to get on with today. In the face of aliens and asteroids and black holes and deep-sea creatures with fleshy lightbulbs dangling from their foreheads and planes that vanish into thin air, human supremacy gives us a false sense of control and predictability. We need to believe that humans are special, and we need to believe that we are special. When AI threatens this, we swing between optimism and despair, looking for anything to cling to. On each side, we double down on our ‘humanness’, on the one thing that ostensibly separates us from all else: our art. In our optimism, we create Sophia, Ai-Da, Spot, Digit, DALL·E, and Midjourney, and in our despair, we grapple with hypotheticals, and forewarn (see: Blade Runner, Her, Ex Machina, all of science fiction, and much of storytelling generally). The great irony here is that the more sophisticated and complex these technologies become, and the more they then threaten our singular status as artists, the more we engage in artistic creation to process these anxieties. That is, the more afraid and confused we are about being replaced as artists, the more art we make about it. Like the double ouroboros, we are locked in a chase, each in pursuit of the other. And so, we know, this can only be the beginning.

— This essay was originally published in issue 40 of VAULT in November 2022.

Harriet Flavel is an arts consultant and writer based in London.